
Conor Mc Gartoll

I'm a graduate student in the Department of Computer Science and Engineering at UCSD, researching manipulation policies that generalize across embodiments (humanoids, static manipulators, etc.) in Xiaolong Wang's lab. I was previously a Software Engineer on the Powertrain Data and Automation Team at Lucid Motors in the San Francisco Bay Area. I completed a B.S. in Mechanical Engineering with a concentration in Computer Science at UCLA, where I worked on surgical robotics research in the Mechatronics and Controls Lab, advised by Dr. Matthew Gerber and Prof. Tsu-Chin Tsao.

Email / X (Twitter) / Patent / GitHub / LinkedIn


Interests

I'm passionate about robot learning 🤖, reading 📘, climbing 🧗, and surfing 🏄‍♂️! If you love reading and learning like me but often lose track of articles, podcasts, etc. across different platforms, check out my website, the someday times! The best way to reach me is via X (Twitter) or email.


Research/Projects


Wang Lab - Generalizable Manipulation (Floating Hand Policy)

Gentle Robotics - Teleoperation Platform for Humanoids

Follow @gentlerobots on 𝕏 for updates!

CrowdBot - Crowdsourcing Quality Robot Foundation Model Training Data

Primary (Color) Care Bot

Demoing at the Hugging Face booth at the 2024 Humanoids Summit! Sorting at the Summit, and a great time with the Hugging Face booth team! Successful sorting that is very robust to failed attempts!

CURRENT GOAL: Allow the robot to clean up several cubes at the same time (instead of one by one)!

Banana-Grama-Bot

Spelling the word NAP!

Letter N -> Location 1
Letter A -> Location 2
Letter P -> Location 3

It's even robust to regrabbing if the first grab fails! The top-down videos are shown with the image overlay that was used for training and fed through the model during inference.

CURRENT GOAL: Allow the robot to pick any letter out of a sea of letters. Current work focuses on extending this to multiple letters and different letter orientations!

Low-Cost Robot Learning

Three examples of successful inference from just 50 training examples! Check out my model:

Trained with imitation learning using Action Chunking with Transformers (ACT).

Music Madness


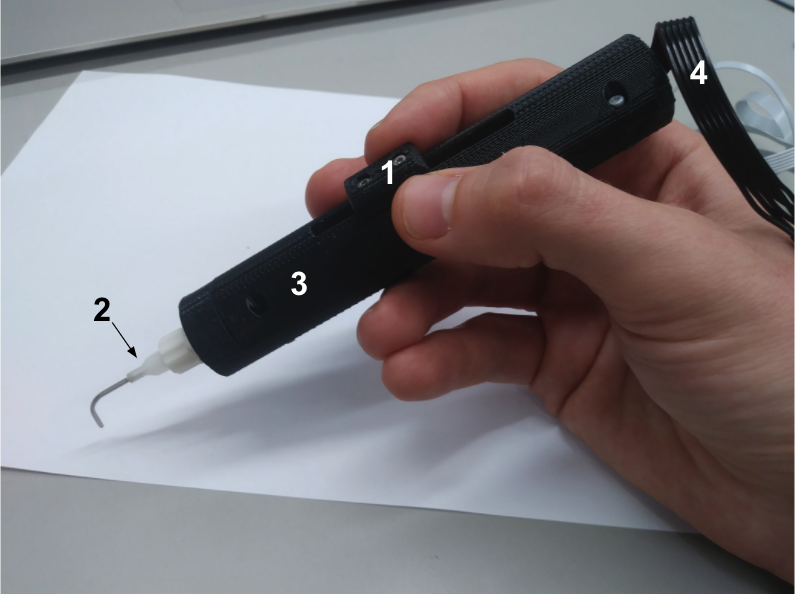

Device for Mobilizing Lens Material and Polishing the Capsular Bag During Cataract Surgery



Forked this website from this template.